Why “Governance” Is Too Small a Word for What AI Needs

Case Study: Klarna and the collapse of control

There’s a word we use too casually in policy circles. It makes us sound responsible, structured, intelligent. That word is governance.

For most of AI’s timeline, governance has been a convenient placeholder. Vague enough to include checklists, ethics statements, regulatory compliance, and the occasional pilot program. It creates the illusion of control while often delivering none.

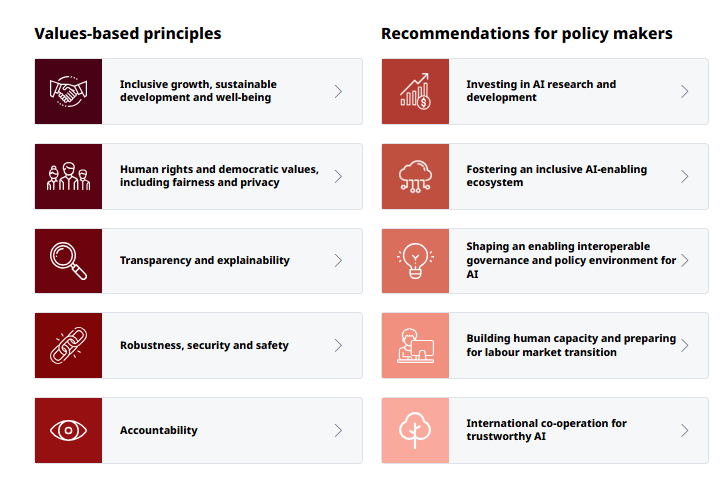

Let’s look at the (non-exhaustive and still growing) list of frameworks, standards, guidelines, best practices, and models that fill, or (ahem) govern, this space:

OECD AI Principles: Five key principles for trustworthy AI adopted by many countries as a benchmark for responsible AI governance.

NIST AI Risk Management Framework (RMF): This framework emphasizes managing AI risks with a focus on security, transparency, and fairness throughout the AI lifecycle.

EU Ethics Guidelines for Trustworthy AI: These guidelines focus on technical robustness, privacy, transparency, diversity, non-discrimination, societal welfare, and accountability.

IEEE Ethically Aligned Design: Guidelines from the Institute of Electrical and Electronics Engineers for the ethical design and implementation of autonomous and intelligent systems.

ASEAN Guide on AI Governance and Ethics: Outlines seven guiding principles and recommends internal governance structures, risk assessment methodologies, operations management, and stakeholder communication for responsible AI use in Southeast Asia.

WHO Guidance on Ethics and Governance of AI for Health: Tailored for healthcare applications, focusing on safety, effectiveness, transparency, and data privacy.

Monetary Authority of Singapore’s FEAT Principles: For the finance sector, focusing on Fairness, Ethics, Accountability, and Transparency in AI and data analytics.

Safety First for Automated Driving (SaFAD): A framework for automotive AI systems, emphasizing safety and reliability in automated vehicles.

These and scores of emerging legislative, regulatory and compliance frameworks for ethical and safe AI use create an appearance of “oversight” and “responsible management”. In practice, they often deliver neither.

The case of Klarna helps us see this clearly.

Klarna’s $40M AI Shortcut

Klarna handed the work of roughly 700 human customer service reps to an AI assistant. The cost savings were broadcast as a win. The AI handled two-thirds of all incoming chats. Productivity soared.

Then came the drop.

Customers started complaining. Responses were off-mark. Frustration grew. Klarna backtracked. Despite adherence to industry best practices for automation and risk management, Klarna failed to deliver substantive oversight. The product failed.

Quietly, they started rehiring humans. As a fix. The governance model they used, most likely a blend of some of the above, didn’t hold up in practice.

Klarna did many things “right”:

Disclosed that the agent was AI

Provided a human fallback option

Promoted ethical intent

And still, they missed the actual point.

The Problem Wasn’t Ethics or Compliance

It was a mismatch between design and expectation, speed and oversight, output and accountability.

This is where the word “governance” fails.

Governance assumes:

Human review is possible

Risk is predictable

Accountability flows up and down through clear lines

Failures are traceable, correctable, and bounded

None of that applied here. And it won’t in most real AI deployments. The moment a system goes live with thousands of user touchpoints, decision latency and ambiguity become structural.

What We Need Instead

Language should track function. If it doesn’t, we design for the wrong threat.

Klarna didn’t need more governance. They needed:

1. Cognitive Infrastructure Management

When you replace humans with probabilistic systems, you're not just swapping labor. You're altering how cognition gets distributed across the interface. Governance checks policy. Infrastructure manages load. Cognitive infrastructure absorbs the strain of misalignment between what users expect and what machines deliver, which also helps with the “explainability” conundrum. Klarna had no mechanism to detect cognitive misfires in real time. The problem wasn't that the bot was wrong; it was that the system had no way to flag wrongness at scale.

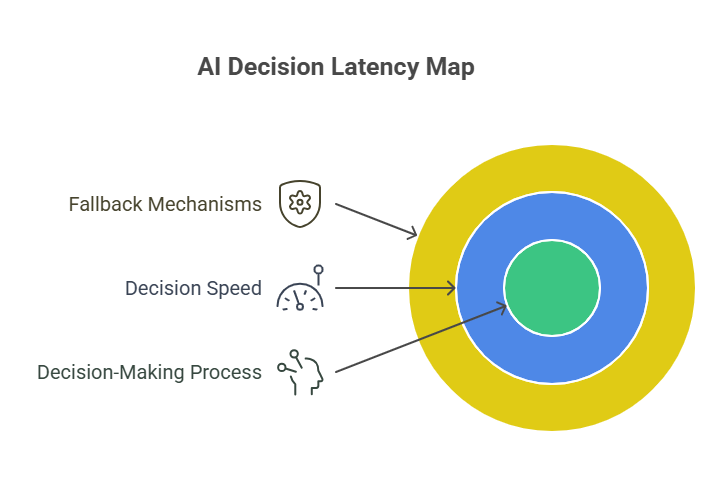

2. Decision Latency Mapping

No AI deployment should go live without a latency map that tracks which decisions are made, where they are made, at what speed, and with what fallback options. Klarna removed human judgment but didn’t replace it with conditional escalation protocols. The AI didn’t know when to defer. A sequencing flaw.
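To make that concrete, here is a minimal sketch of what a latency map with a conditional escalation rule could look like. Everything in it is an assumption for illustration: the intent names, the confidence threshold, the time limits, and the `route` function are hypothetical and not drawn from Klarna's system.

```python
from dataclasses import dataclass


@dataclass
class DecisionPoint:
    name: str             # which decision is being made
    owner: str            # where it is made: "ai" or "human_agent"
    max_latency_s: float  # how long the decision is allowed to take
    fallback: str         # who takes over when the AI should defer


# Hypothetical latency map for a customer support assistant.
LATENCY_MAP = {
    "order_status": DecisionPoint("order_status", "ai", 5.0, "human_agent"),
    "refund_dispute": DecisionPoint("refund_dispute", "ai", 30.0, "human_agent"),
}


def route(intent: str, confidence: float, elapsed_s: float,
          min_confidence: float = 0.8) -> str:
    """Return who should handle the decision under the escalation rule."""
    point = LATENCY_MAP.get(intent)
    if point is None:
        # Decisions missing from the map default to a person.
        return "human_agent"
    if confidence < min_confidence or elapsed_s > point.max_latency_s:
        # The AI defers when it is unsure or has taken too long.
        return point.fallback
    return point.owner
```

The point of the sketch is the shape, not the numbers: every decision has an owner, a time budget, and a named fallback, and anything unmapped goes to a human by default.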

3. Perception Feedback Loops

Most governance frameworks stop at “was it fair” or “was it safe.” They rarely ask: Did the user feel served? Did they feel heard? Did they trust the exchange enough to repeat it? Klarna’s failure was perceptual, not mechanical. Their governance language had no tools to track sentiment decay in real time.
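One simple way to picture tracking sentiment decay is to compare recent user ratings against an early baseline. The sketch below assumes a 1-to-5 post-chat rating and a hypothetical `SentimentDecayMonitor` class; the window size and drop threshold are placeholders, not recommended values.

```python
from collections import deque
from statistics import mean


class SentimentDecayMonitor:
    """Flags when recent user ratings fall well below an early baseline."""

    def __init__(self, window=5, max_drop=1.0):
        self.window = window
        self.max_drop = max_drop
        self.baseline = []                  # the first `window` ratings
        self.recent = deque(maxlen=window)  # the latest `window` ratings

    def record(self, rating):
        """Store one rating and return True if decay is detected."""
        if len(self.baseline) < self.window:
            self.baseline.append(rating)
            return False
        self.recent.append(rating)
        if len(self.recent) < self.window:
            return False
        # Decay: the recent average sits more than `max_drop` below baseline.
        return mean(self.baseline) - mean(self.recent) > self.max_drop
```

A real perception loop would need richer signals than a star rating, but even this much gives the organization an alarm that governance checklists do not.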

Words Shape Systems

Governance isn't wrong. It's just not enough. And calling it that keeps us operating in the wrong mode. It signals that the problem is procedural when it’s actually architectural.

Klarna’s failure wasn’t in their ambition. It was in their framing.

They optimized for rules. What they needed was design authority (we’re keeping that for a later post).

Useful Next Steps for Any Leader Deploying AI at Scale

Replace “governance” with “operational cognition” in internal documents. Watch how it forces your teams to reframe their role.

Add a decision latency metric to every AI system you own. Not just how fast the AI answers, but how slow your org is to recover from a bad one.

Create a perception audit trail for every user interaction. Not just whether it worked, but whether it felt right.
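As a rough illustration of the decision latency metric in the second step, the sketch below measures how long the organization takes to correct a bad AI answer. The incident format (a pair of timestamps) and the function names are assumptions made for the example.

```python
from datetime import timedelta
from statistics import median


def recovery_latencies(incidents):
    """incidents: list of (bad_answer_at, corrected_at) datetime pairs."""
    return [corrected - bad for bad, corrected in incidents]


def median_recovery(incidents):
    """Median time between a bad answer and its correction."""
    seconds = [lat.total_seconds() for lat in recovery_latencies(incidents)]
    return timedelta(seconds=median(seconds))
```

Reported next to the AI's response time, this number shows the gap the post is pointing at: the machine answers in seconds, while the recovery may take hours.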

Final Word

We’re entering a phase where AI doesn’t just process information. It shapes how people think, what they believe, and how they decide. Governance doesn’t cover that. It's too slow, too static, too narrow.

If your system can affect cognition, it isn’t governed. It must be commanded.

I’ve spent the last few years helping governments, impact funds and multilateral institutions think clearly when speed and structure collide. The lesson, again and again, is simple: the language we inherit often works against the systems we’re trying to build.

If this post reflected a question you’re asking or one you suspect you’ll be asked soon, feel free to stay connected!