The Missing Org Chart

Who actually governs AI?

There’s no single law, no single office, and no map. Yet, the decisions shaping how AI behaves, where it runs, and who gets to use it are being made every day.

Interestingly, it’s not governments making them.

First, a contrast.

When OpenAI appeared before Congress in 2023, the headlines focused on safety, ethics, and guardrails. At the same time, behind the scenes, OpenAI quietly adjusted how GPT-4 handled political topics. That change reached more people than any court ruling that year. There was no waiting for permission: the model was updated, and the update redrew the boundaries of what’s visible, discussable, and knowable online. We often think of governance as law. But when it comes to AI, law trails deployment.

A second example, familiar but misunderstood.

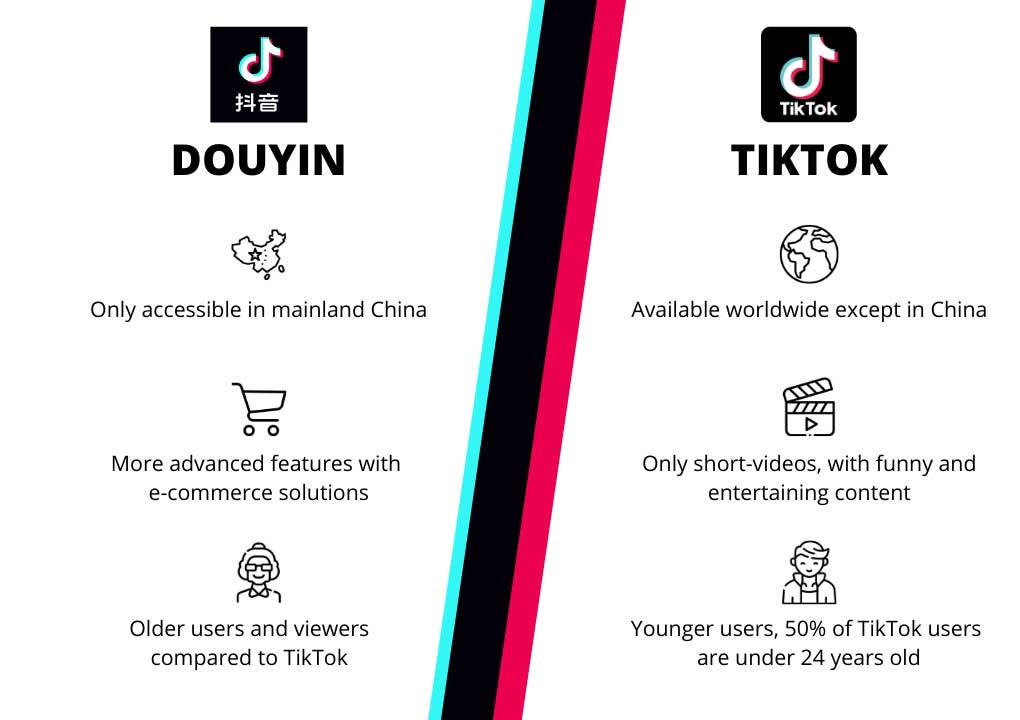

TikTok and Douyin are the same product from the same company, ByteDance. At least, until they’re not.

Same interface. Same engine. But in China, Douyin rewards educational content, limits usage for minors, and embeds civic campaigns. Globally, TikTok optimizes for engagement and revenue.

Two apps. Two jurisdictions. Two political theories about what technology is for.

Same code, different constraints. The algorithm doesn’t shape society. Governance does, and in this case it works through design choices.

So where does AI governance really happen?

Not in parliaments. Not in roundtables. Not in frameworks.

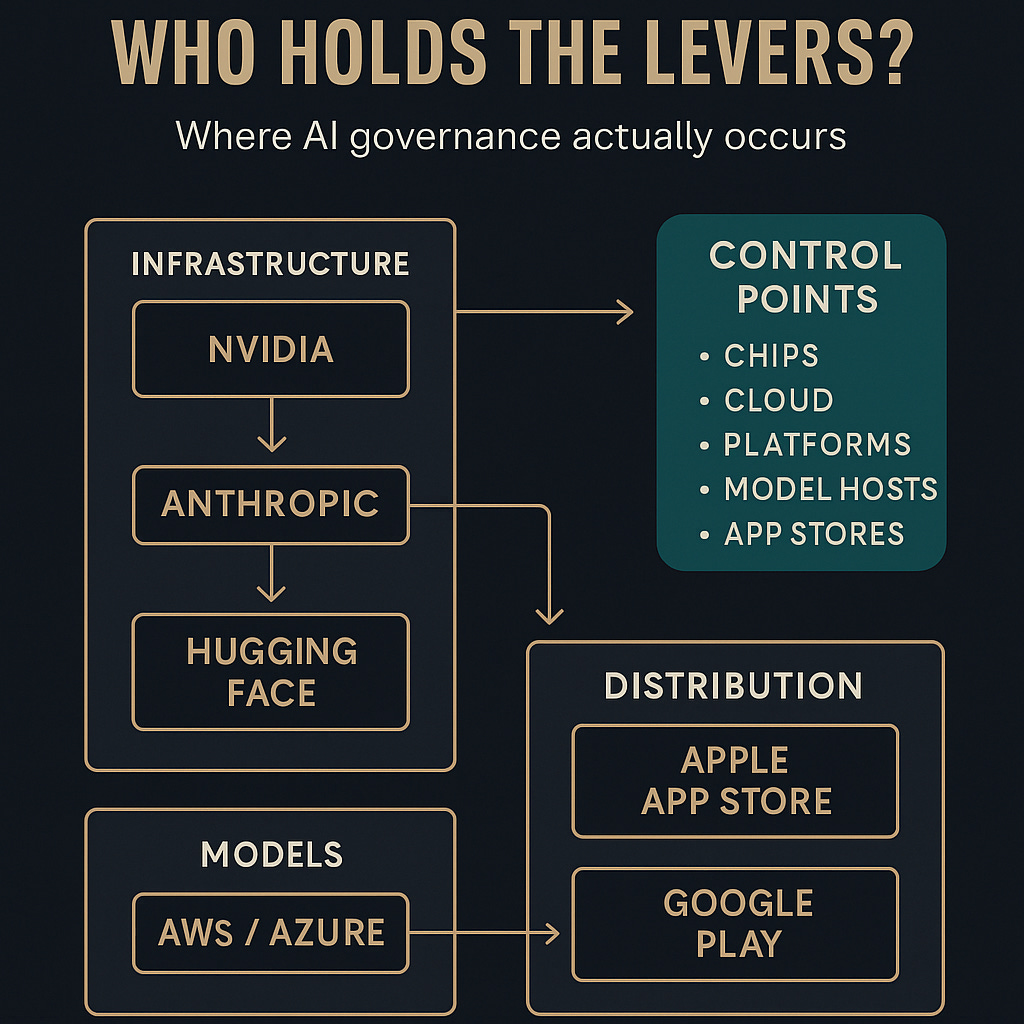

It happens at chokepoints. Places where a few actors decide how AI models will operate, and what’s possible for everyone else.

These chokepoints fall into three tiers.

Tier 1: Infrastructure

Start with chips.

NVIDIA controls most of the hardware needed to train large AI models. When the U.S. restricted chip exports to China, it didn’t just affect trade; it reshaped the timeline of technological development for an entire bloc of countries. Here, it’s the chips that hold the policy leverage.

Then there’s cloud. AWS, Azure, and Google Cloud host nearly all commercial AI deployments. Their terms of service decide which applications live or die.

Tier 1 enforces governance by access, not law.

Tier 2: Models

Anthropic trains values into its models through a method it calls “Constitutional AI.” The principles are published, but the company writes them, and no legislature or regulator signs off on them. They’re design decisions that govern how models respond to billions of prompts.

Hugging Face, meanwhile, moderates which models show up in its repositories. A small team makes choices that quietly influence what most of the global open-source community builds on.

Tier 2, therefore, enforces governance through editorial control, scaled globally.

Tier 3: Distribution

Apple and Google approve which AI apps reach the public. If your system doesn’t meet their design rules, it doesn’t launch.

You could comply with every national regulation and still be blocked at the distribution layer. And most users would never know why.

Tier 3 enforces AI governance through distribution control, exercised first by device and operating-system makers and then by national governments that do not want certain content or apps available in their jurisdictions.

Why governments are late

Laws move at the pace of debate. AI moves at the pace of code.

The EU’s AI Act took about three years to get from proposal to passage. In that time, we went from GPT-3 to GPT-4o, Claude, and Gemini.

Legislation wasn’t built to chase architecture, which is what it has to do now.

Why this matters more in emerging economies

Countries across the Global South are writing AI strategies and policies. Those documents and laws often sit on top of tiers of the ecosystem they don’t control.

They depend on foreign models, foreign platforms, foreign chip supply. There’s no funding to build alternatives. No capacity to enforce standards. No leverage to shape the defaults.

So they inherit the system as-is. And try to regulate after the fact.

Two models worth watching

China governs AI like it governs everything else: through documentation, mandates, and tight integration with the state. Algorithms are registered. Data must be localized. Licenses are required.

Europe takes a different route. It doesn’t regulate development. It regulates access. If you want to sell, you comply.

Neither system is perfect. But both understand something others ignore:

Control doesn’t happen at endpoints. It happens at chokepoints.

What matters now

Short-term:

Who controls model weights

What platform rules are shifting

Where API access is tightening

Long-term:

Whether democracy can shape systems it doesn’t own

Whether governance becomes a design function—not just a legal one

Final note

There is no global governance model. There is no org chart.

But there is architecture. There are patterns. And there are people (largely unelected) making the decisions.

If you’re not at the table, you’re inside the code.