Deconstructing MIT's State of AI in Business Report

Why GenAI has failed to generate the expected ROI and what businesses can do about it.

MIT's 2025 State of AI Report delivers a sobering reality check: despite $30-40 billion in enterprise investment in generative AI last year, 95% of organizations saw zero measurable return. As I read through it, I could not help but ask what is actually happening here. The technology isn't failing, at least not for the most part, until it hallucinates. The story is instead about the fundamental disconnect between deployment and integration and, consequently, the transformation that was anticipated but never arrived.

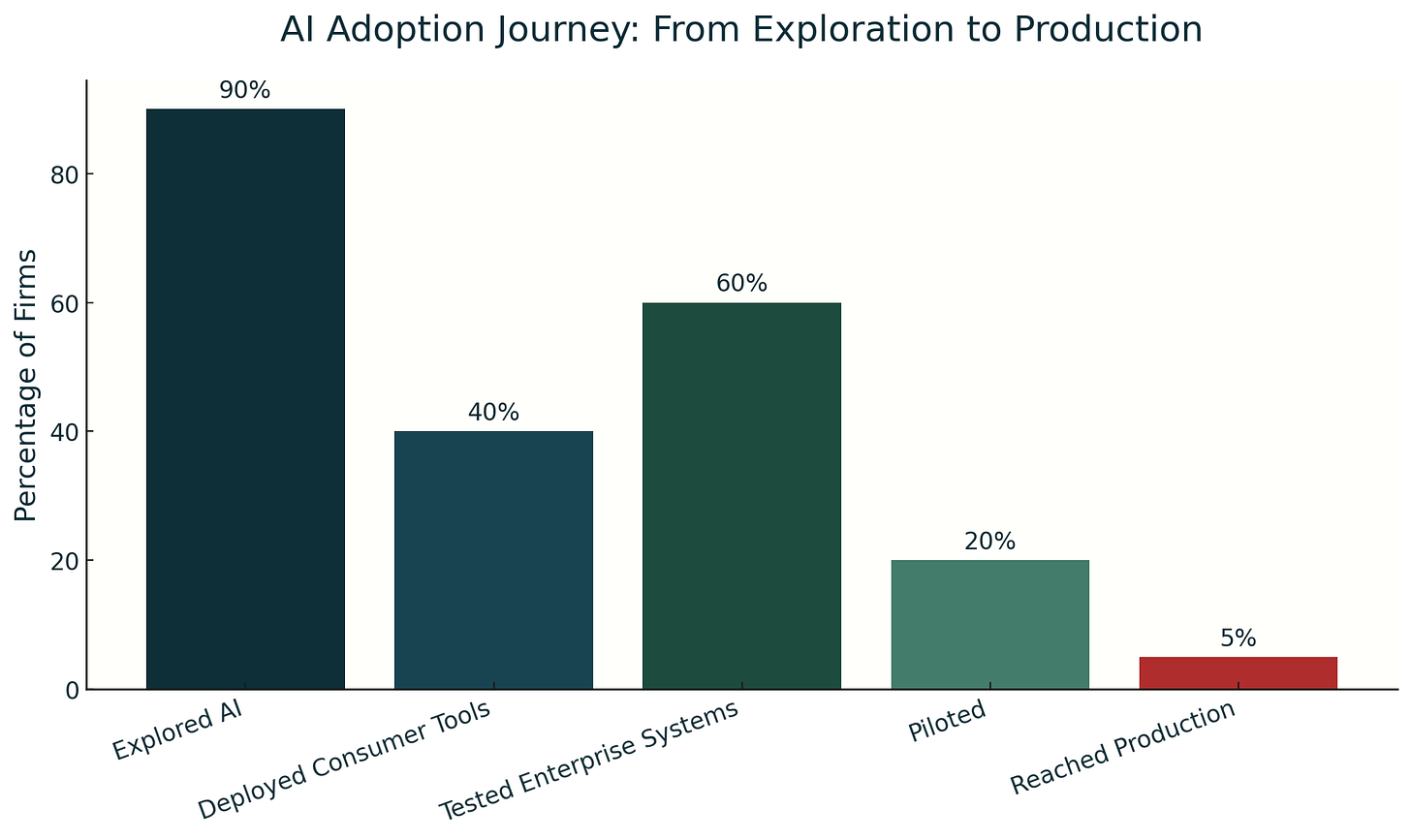

Enterprises poured $30-40 billion into generative AI last year. The data reveals a stark GenAI Divide separating a tiny group of winners from everyone else. Only 5% of organizations scaled AI tools into actual workflows. Just two sectors achieved structural transformation. The rest chased pilots that never crossed to production.

These numbers contradict the surface-level adoption narrative. While 90% of firms explored AI solutions and 40% deployed consumer tools like ChatGPT, meaningful integration remains elusive. The progression tells the story: 60% tested enterprise systems, 20% launched pilots, but only 5% reached production scale.
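To make the drop-off concrete, here is a minimal sketch that computes how many organizations carry forward from one stage to the next, using only the percentages quoted above. Treating the three figures as a strict funnel (each stage a subset of the previous one) is my assumption for illustration, and the names `funnel` and `stage_conversions` are hypothetical.

```python
# Adoption figures as quoted in the paragraph above (percent of organizations).
# Assumption: each stage is a subset of the one before it.
funnel = [
    ("tested enterprise systems", 60),
    ("launched pilots", 20),
    ("reached production scale", 5),
]


def stage_conversions(stages):
    """Return (from_stage, to_stage, percent_carried_forward) for each adjacent pair."""
    return [
        (prev_name, name, round(100 * share / prev_share, 1))
        for (prev_name, prev_share), (name, share) in zip(stages, stages[1:])
    ]


conversions = stage_conversions(funnel)
for src, dst, pct in conversions:
    print(f"{src} -> {dst}: {pct}% carried forward")
```

Under that assumption, roughly a third of the organizations that tested enterprise systems went on to a pilot, and a quarter of those pilots reached production.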

This pattern aligns with what I've previously analyzed about pilot-production crossover failures (see The Executive's Guide to AI that Doesn't Lose its Soul). Corporate enthusiasm, disconnected from strategic objectives, consistently produces low-impact outcomes. Organizations see productivity boosts for individual tasks, but these tools rarely move the P&L needle. MIT calls this the "pilot-to-production chasm", a gap that enthusiasm alone cannot bridge.

Interestingly, industry projections tell a different story. Capgemini, for example, sees rapid transformation in operations and growth. McKinsey projects $2.6-4.4 trillion in global value creation. KPMG notes efficiency gains across tech firms (as we've seen with vibe coding tools like Cursor or Bolt). These optimistic views assume that capability automatically translates into results. This is a fundamental attribution error that ignores implementation reality. It is like arguing in your school days for a better laptop or PC to get better grades, when you and everyone else knew that a major chunk of its time would be spent playing Call of Duty.

The 95% failure rate proves the point: deployment without integration and a coherent long-term strategy wastes billions.

So what’s the so what? The real barriers run deeper than the technology, which is why most explanations miss the mark entirely. Model quality is not the bottleneck. Legal risks don't explain the divide. Data concerns fall short of the full picture. The core problem lies in learning and organizational fit.

Most enterprise AI tools don't remember past interactions or understand ongoing context. As a result, they make the same mistakes repeatedly, because they cannot learn from what happened before.

For example, at my previous employer, several internal chatbots designed to support business development never reached maturity. The problem wasn't the chatbots' ability to generate responses but that the integration stopped short. There was no system in place for a chatbot to access or update company knowledge or customer histories, so users had to re-enter all the information with every interaction. Without memory, these tools reset after every session and stay blind to accumulated knowledge.

Consumer AI systems like ChatGPT handle flexible, open-ended tasks well because each response is a one-off answer to a varied prompt. Enterprise AI fails when it must operate within strict, repeatable workflows that demand continuity, such as tracking customer conversations or managing multi-step processes. One executive in the MIT report noted that chatbots could produce a first draft but consistently ignored client preferences over time (a pattern I had experienced myself and sought to rectify).

For enterprise AI to be useful beyond novelty, it must remember past interactions, adapt continuously, and integrate deeply with existing organizational data and workflows. Without these capabilities, AI tools remain limited, like toys that entertain but don't deliver real value.
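As a rough illustration of the memory gap described above, the sketch below shows the simplest possible version of cross-session memory: client preferences saved to a file and prepended to the next request. Everything here is hypothetical (the `PreferenceMemory` class, `build_prompt`, the "Acme Corp" client, the JSON file); it is not how any particular vendor or the tools at my previous employer worked, and a real deployment would sit on top of CRM and knowledge-base systems instead of a local file.

```python
import json
import tempfile
from pathlib import Path


class PreferenceMemory:
    """Hypothetical file-backed store of per-client preferences (illustration only)."""

    def __init__(self, path):
        self.path = Path(path)
        # Load whatever earlier sessions saved; start empty on first use.
        self.data = json.loads(self.path.read_text()) if self.path.exists() else {}

    def remember(self, client, preference):
        prefs = self.data.setdefault(client, [])
        if preference not in prefs:
            prefs.append(preference)
        self.path.write_text(json.dumps(self.data))  # persist beyond this session

    def recall(self, client):
        return list(self.data.get(client, []))


def build_prompt(memory, client, request):
    """Prepend remembered preferences so the user need not re-enter them."""
    prefs = memory.recall(client)
    context = "; ".join(prefs) if prefs else "none recorded"
    return f"Client: {client}\nKnown preferences: {context}\nRequest: {request}"


store_path = Path(tempfile.mkdtemp()) / "client_memory.json"

# Session 1: a user corrects a draft, and the correction is stored.
session_one = PreferenceMemory(store_path)
session_one.remember("Acme Corp", "prefers bullet-point summaries")

# Session 2: a fresh object (a new session) still sees the earlier feedback.
session_two = PreferenceMemory(store_path)
prompt = build_prompt(session_two, "Acme Corp", "Draft a proposal outline")
print(prompt)
```

The point of the sketch is the second session: because feedback was written somewhere durable, the next draft starts from what the client already said instead of from zero.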

Learning from embedded success models

The organizations crossing the pilot-to-production divide don't treat AI as a separate project. They embed AI expertise directly within teams, redesigning workflows and building systems that evolve through actual use. This means AI specialists work alongside teams inside the organization, not as external consultants delivering reports.

Palantir's forward-deployed engineers exemplify this approach. They don't just drop off software and leave. They embed within client teams, study existing workflows in detail, and identify where AI creates the most value. Then they build AI systems that evolve based on real-world usage and feedback. OpenAI uses a similar model with a steep $10 million entry fee designed to break "pilot purgatory." This fee creates immediate accountability, ensuring pilots rapidly convert to production scale. It eliminates endless internal debates and unproductive workshops, focusing resources exclusively on turning experiments into working systems.

This approach spans industries and sectors. Success requires shifting from reports and demos to embedding teams that redesign workflows with AI as a core component. The technology stops being a sideline experiment and becomes integral to the organization's operating system.

This embedded approach also addresses what I've observed firsthand and the report documents: shadow adoption. Workers, frustrated by stalled official AI efforts, seek out personal tools like ChatGPT when company-approved solutions fail to deliver. Rather than treating this as a compliance problem, leaders should recognize it as free market research revealing urgent workflow needs.

This underground usage shows real demand for AI tools that fit everyday work. Organizations that succeed respond by training employees to work effectively with AI assistants that learn and improve over time. They change procurement processes to choose AI tools that grow with their needs and empower managers to lead human-AI collaboration.

This human-AI partnership bridges the GenAI Divide. AI tools must have memory systems to learn from ongoing feedback. Workflows must be fundamentally redesigned to allow people and AI to work as partners, not separate silos. Vendors meeting these integration requirements scale quickly. Those that don't end up stuck in endless pilots, never moving to real deployment.

The path forward

Crossing the GenAI Divide requires alignment before action. Organizations must first map AI initiatives to core strategic objectives, whether revenue growth, operational efficiency, or sustainability targets. Without this north-star alignment, efforts scatter across disconnected pilots that impress in demos but crumble under operational pressure.

The measurement framework must span financial, customer, and process dimensions. Embed technical experts who can redesign workflows for human-AI collaboration. Train teams for adaptive partnering rather than tool usage. Monitor broader risks including talent competition and regulatory evolution. Together, these steps build an AI-enabled system resilient to evolving operational, talent, and regulatory landscapes.

This creates positioning for the emerging agentic future. Organizations that commit to this integrated approach now will transform today's $40 billion waste into tomorrow's competitive advantage.

The framework is clear: treat AI as operating system evolution, not feature addition. Measure integration success against strategic purpose. Adapt continuously as AI capabilities reveal new operational possibilities.

The GenAI Divide separates organizations that deploy technology from those that integrate intelligence. Which side will your organization choose?

If your organization faces these AI integration challenges, my AIG-OS™️ framework bridges the pilot-to-production gap. Through rapid readiness assessments, governance implementation, and embedded advisory support, I help leaders align AI with strategic objectives while ensuring compliance and measurable impact. The approach delivers 50-70% faster AI approvals and sustained competitive advantage. For a confidential discussion on crossing the GenAI Divide, reach out directly at syed@shehryr.com or connect with me on LinkedIn.